CARL: Controllable Agent with Reinforcement Learning for Quadruped Locomotion

ACM Transactions on Graphics (Proceedings of SIGGRAPH 2020)

Authors

Luo, Ying-Sheng* and Soeseno, Jonathan Hans* and Chen, Trista Pei-Chun and Chen, Wei-Chao (*denotes joint first authors)

Published

2020/8/12

Abstract

Motion synthesis in a dynamic environment has been a long-standing problem for character animation. Methods using motion capture data tend to scale poorly in complex environments because of their greater capture and labeling requirements.

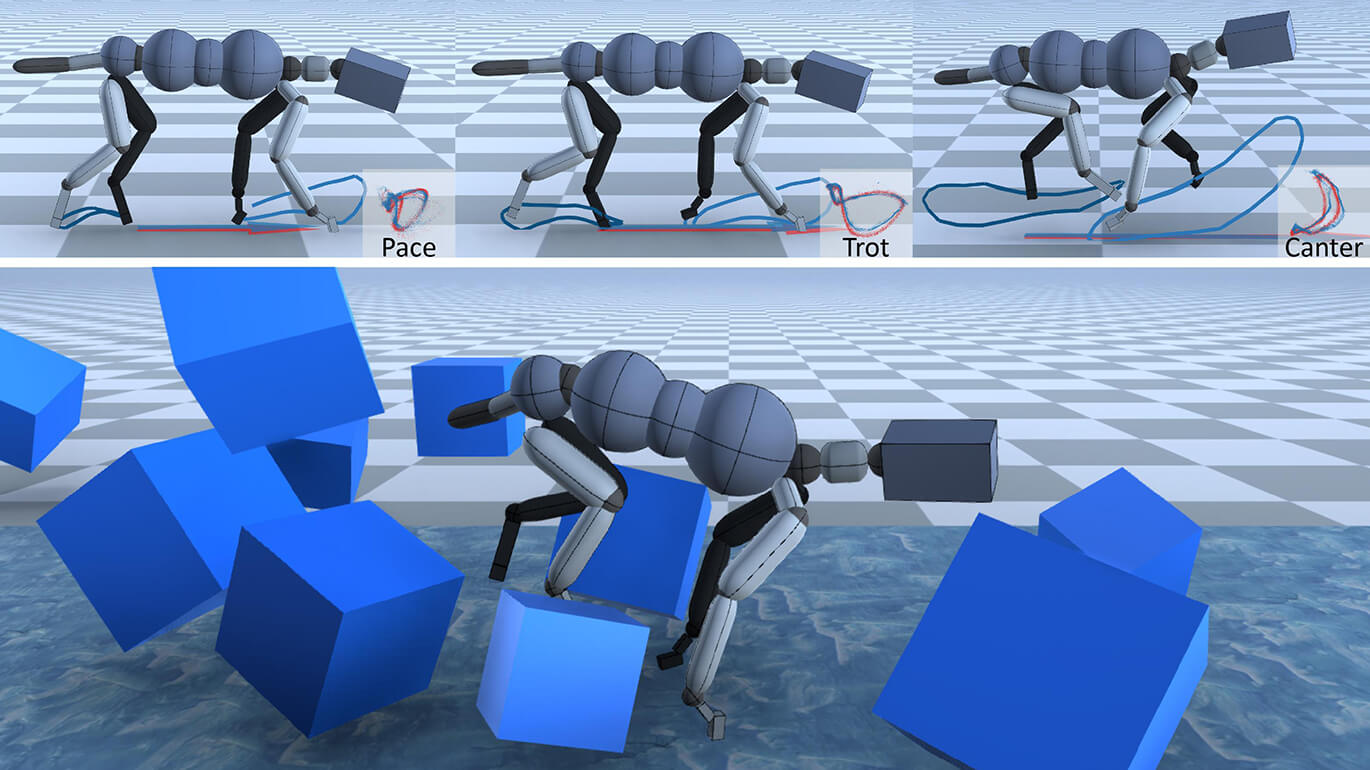

Physics-based controllers are effective in this regard, albeit less controllable. In this paper, we present CARL, a quadruped agent that can be controlled with high-level directives and react naturally to dynamic environments. Starting with an agent that can imitate individual animation clips, we use Generative Adversarial Networks to adapt high-level controls, such as speed and heading, to action distributions that correspond to the original animations.

Further fine-tuning through deep reinforcement learning enables the agent to recover from unseen external perturbations while producing smooth transitions. It then becomes straightforward to create autonomous agents in dynamic environments by adding navigation modules over the entire process. We evaluate our approach by measuring the agent’s ability to follow user control and provide a visual analysis of the generated motion to show its effectiveness.

Keywords

- Physical Simulation

- Reinforcement Learning